Artificial intelligence in healthcare is no longer a future possibility. It is happening now, at scale, across every type of healthcare organization.

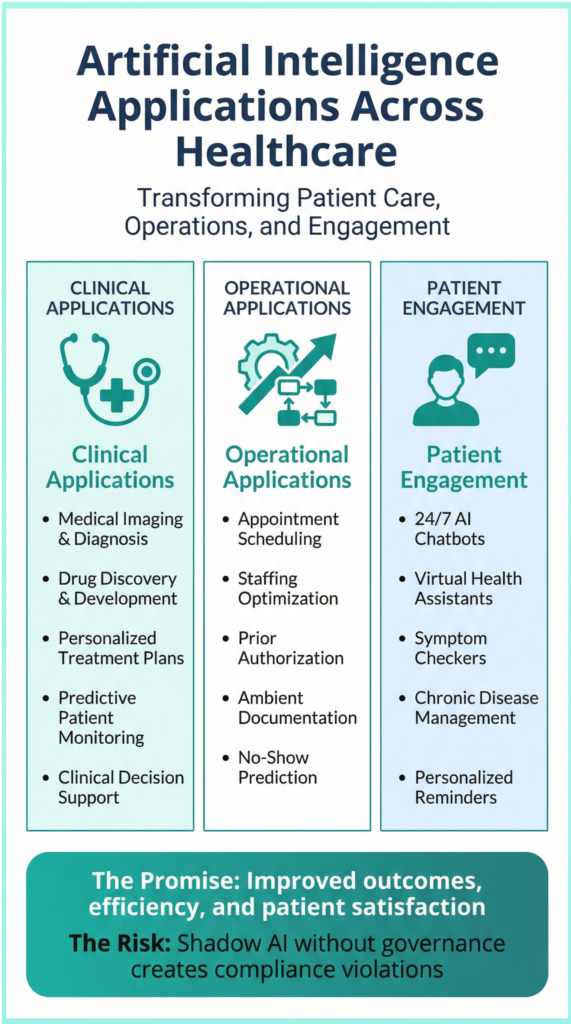

AI is diagnosing diseases from medical images with accuracy that rivals radiologists. AI is discovering new drug candidates in months instead of years. AI is personalizing treatment plans based on genetic profiles and clinical history. AI is automating administrative tasks that consume hours of staff time. AI is answering patient questions, scheduling appointments, and predicting no-shows.

The benefits are real and compelling. Research shows that properly implemented AI can reduce medical errors, improve diagnostic accuracy, accelerate drug discovery, enhance patient engagement, and free clinicians to focus on patient care rather than documentation. Healthcare organizations that adopt AI strategically are gaining operational advantages and improving patient outcomes.

These contributions span the clinical side, from diagnostic assistance to treatment personalization, as well as operations and patient interaction.

The momentum is undeniable. The global artificial intelligence in healthcare market is projected to reach $110 billion by 2030, growing at more than 38.6 percent annually. Major health systems are announcing AI initiatives. Vendors are flooding the market with AI-powered tools. Boards and executives are demanding innovation. The message is clear: adopt AI or fall behind.

Organizations are right to give AI a place in their strategic planning.

But there is a hidden risk in this rapid adoption. A risk that most healthcare organizations are not aware of until it creates compliance violations, security breaches, or patient safety incidents.

That risk is Shadow AI.

While leadership announces strategic AI initiatives and IT evaluates enterprise AI platforms, staff across the organization are quietly adopting AI tools on their own. Contact center agents are using ChatGPT to draft patient communications. Schedulers are using AI chatbots to answer appointment questions. Clinicians are using ambient documentation tools to transcribe encounters. Marketing teams are using generative AI to create content.

Their motives are sound. AI can automate mundane tasks, optimize workflows, and reduce operational costs, freeing clinicians to focus on patient care, and organizations do need it to stay competitive. Every user has good intentions, but collectively they are creating a compliance nightmare.

This is Shadow AI, and it is proliferating across healthcare organizations at an alarming rate.

AI in healthcare presents both opportunities and challenges. It can support better decision-making, patient safety, and operational efficiency, but Shadow AI can undermine those benefits if it is not properly managed. The potential of AI should be harnessed with governance and oversight, and the governance frameworks must evolve as the technology does.

That responsibility falls to healthcare leaders. Clear communication and education about AI can ease staff concerns and resistance, training on AI tools minimizes risk, and ongoing monitoring of AI's impact sustains success. Used responsibly and ethically, AI improves patient trust and engagement as well as operational performance.

The Promise of Artificial Intelligence in Healthcare

Before we address the hidden risk, it is important to understand why AI adoption is accelerating so rapidly in healthcare.

Adopted responsibly, AI can drive advances in medical science and foster trust among patients and providers, provided the concerns surrounding it are addressed.

Clinical applications of AI are transforming patient care. AI algorithms analyze medical images to detect cancer, fractures, and other conditions with accuracy that matches or exceeds that of human radiologists. Predictive models flag patient deterioration hours before clinical symptoms appear, enabling earlier intervention. They identify patients at high risk for readmission, allowing care teams to provide targeted support. AI also personalizes treatment recommendations based on genetic profiles, clinical history, and real-world evidence.

Operational applications of AI are improving efficiency. AI automates appointment scheduling, reducing call volume and wait times. It optimizes staffing levels based on predicted patient demand, streamlines the prior authorization processes that delay care, and generates clinical documentation from ambient listening, reducing physician documentation burden. It also predicts no-shows and automates outreach to reduce missed appointments.

Patient engagement applications of AI are enhancing access. AI-powered chatbots answer patient questions 24/7, providing instant responses without wait times. AI virtual assistants help patients manage chronic conditions like diabetes and hypertension. AI symptom checkers guide patients to appropriate care settings. AI sends personalized appointment reminders and follow-up instructions.

These applications carry obligations. Organizations must be transparent about how they use AI, engage patients about its benefits, navigate the ethical complexities involved, and invest in the right tools to support their initiatives.

The benefits are measurable. Organizations that implement AI successfully report reduced diagnostic errors, faster treatment initiation, improved patient satisfaction, decreased staff burnout, and significant cost savings.

The adoption pressure is intense, and it comes from multiple directions. Boards demand innovation and competitive positioning. Patients expect digital experiences comparable to other industries. Staff need relief from administrative burden and burnout. Competitors are announcing AI initiatives. Vendors are pitching AI solutions daily.

The result: healthcare organizations are adopting AI rapidly—often without adequate governance frameworks to manage risk.

The Hidden Risk: Shadow AI in Healthcare Organizations

Shadow AI refers to artificial intelligence tools adopted by staff without formal IT approval, compliance review, or organizational oversight. It is the AI equivalent of Shadow IT—the use of unauthorized software and cloud services that has challenged IT departments for years.

Shadow AI is different from strategic AI initiatives. When leadership announces an AI initiative, there is a formal evaluation process. IT assesses integration requirements. Compliance reviews HIPAA implications. Contracts are negotiated. Implementation is planned. Governance is established.

Shadow AI bypasses all of this. Staff discover AI tools through online searches, peer recommendations, or vendor outreach. They start using them immediately because the tools are free or low-cost, easy to adopt, and promise instant productivity gains. No approval is requested. No compliance review occurs. No integration planning happens.

The result: a healthcare organization can have 5 to 15 AI tools in use that leadership does not know about, IT has not evaluated, and compliance has not approved.

Common Examples of Shadow AI in Healthcare

Generative AI tools like ChatGPT, Google Gemini, and Claude are being used to draft patient communications, summarize clinical notes, answer medical questions, and create content. Staff input patient information without realizing these tools may store data on vendor servers, use it to train AI models, or transmit it without encryption.

AI chatbots are being integrated into websites and patient portals by contact center staff to handle appointment scheduling and patient inquiries. These chatbots often lack integration with EHR systems, have no clinical validation, and provide no audit trail when they give incorrect information.

Ambient documentation tools are being used by clinicians to transcribe patient encounters and generate clinical notes. Many of these tools record conversations and store them on third-party servers without Business Associate Agreements, creating HIPAA violations.

AI scheduling assistants are being used to optimize appointment calendars and send automated reminders. These tools often lack integration with contact center platforms and EHR systems, creating manual workarounds and data inconsistencies.

Automated communication tools are being used to send appointment reminders, follow-up instructions, and patient education materials. When these tools generate content using AI without human review, they can send inaccurate or inappropriate information to patients.

AI-powered analytics tools are being used to analyze patient data, predict no-shows, and identify high-risk patients. These tools often process protected health information without proper security controls or compliance oversight.

What these tools have in common is a low barrier to entry. They are often free or low-cost, easy to adopt, and promise immediate productivity gains. Staff discover them through online searches, peer recommendations, or vendor cold outreach, and start using them without asking permission.

Why Shadow AI Is Proliferating

Shadow AI is not a technology problem. It is a process problem. Healthcare staff are adopting AI tools without oversight for predictable reasons.

Pressure to innovate. Healthcare organizations are under intense pressure to adopt artificial intelligence. Leadership mandates innovation. Competitors announce AI initiatives. Vendors flood inboxes with promises of efficiency gains. Staff feel pressure to show they are adopting AI—even if formal approval processes do not exist.

Slow approval processes. Many healthcare organizations have slow, bureaucratic approval processes for new technology. IT reviews can take months. Compliance reviews add more time. Budget approvals require multiple stakeholders. Staff bypass these processes to move faster and solve problems now rather than waiting.

Lack of clear governance. Most healthcare organizations do not have formal AI governance policies. There is no clear process for evaluating AI tools. No defined criteria for approval. No designated decision-maker. In the absence of governance, staff make their own decisions about which AI tools to adopt.

Easy access to AI tools. AI tools are increasingly accessible. Many are free, including ChatGPT and Google Gemini. Others offer free trials or low-cost subscriptions. They require no IT involvement to start using. Staff can adopt AI tools in minutes without asking permission or involving IT.

Good intentions. Staff are not trying to create risk. They are trying to work more efficiently, serve patients better, and reduce burnout. They see AI as a solution to real problems—repetitive tasks, high call volume, documentation burden, scheduling bottlenecks. But good intentions do not eliminate risk.

The combination of innovation pressure, slow processes, absent governance, easy access, and good intentions creates the perfect conditions for Shadow AI proliferation.

The Risks of Shadow AI

Shadow AI creates significant risks for healthcare organizations across multiple dimensions.

1. HIPAA Compliance Violations

Many AI tools process patient data without proper safeguards. If staff input patient information into ChatGPT, Google Gemini, or other consumer AI tools, that data may be stored on vendor servers without Business Associate Agreements, used to train AI models in violation of patient privacy, transmitted without encryption in violation of HIPAA security requirements, or accessible to unauthorized users in violation of HIPAA access controls.

The consequence: HIPAA violations trigger regulatory investigations, fines, breach notification requirements, legal liability, and loss of patient trust. A single Shadow AI tool processing patient data without a BAA can result in hundreds of thousands of dollars in fines and significant reputational damage.

2. Data Security Breaches

AI tools that are not vetted for security may have vulnerabilities that expose patient data. If an AI tool is compromised by attackers, patient information could be stolen, leaked, or held for ransom. Healthcare organizations are responsible for protecting patient data even when staff use unauthorized tools. Breach notification requirements apply regardless of whether the organization knew about the tool.

3. Inaccurate or Harmful Outputs

AI tools can produce inaccurate, misleading, or harmful outputs. Generative AI is known to “hallucinate,” generating plausible-sounding but incorrect information. If staff rely on AI-generated content without verification, patients may receive incorrect medical information (wrong dosage, contraindications, or treatment advice); misleading appointment instructions (wrong location, time, or preparation requirements); or inappropriate communications (insensitive language or cultural misunderstandings).

The consequence: Patient harm, complaints, negative reviews, legal liability, and erosion of trust. Even if no physical harm occurs, inaccurate AI outputs damage the organization’s reputation and patient relationships.

4. Inconsistent Patient Experiences

When different departments use different AI tools without coordination, patients receive inconsistent experiences. One department uses an AI chatbot that provides instant answers. Another requires patients to call and wait on hold. One uses AI-generated appointment reminders with personalized instructions. Another sends generic manual emails. One has AI-assisted scheduling with real-time availability. Another requires multiple phone calls to find an appointment.

The consequence: Patient confusion, frustration, and erosion of trust. Patients expect consistent experiences across touchpoints. Shadow AI creates fragmentation that undermines patient satisfaction and loyalty.

5. Vendor Lock-In and Sprawl

When staff adopt AI tools independently, organizations end up with a proliferation of vendors, subscriptions, and integrations. This creates vendor sprawl, with dozens of AI tools performing overlapping functions; integration complexity, with tools that do not connect to EHR or contact center platforms; cost inefficiency, from paying for multiple tools that could be consolidated; and support burden, as IT must support tools they did not choose and may not understand.

The consequence: Wasted budget, operational inefficiency, and IT overwhelm. Organizations lose negotiating leverage with vendors and cannot optimize their technology stack strategically.

6. Loss of Organizational Control

Shadow AI undermines organizational control over technology strategy. Leadership cannot make informed decisions about AI adoption if they do not know what tools are being used. IT cannot ensure security and integration if they do not have visibility. Compliance cannot assess risk if they do not know what data is being processed. Operations cannot optimize workflows if AI tools are deployed inconsistently.

The consequence: Chaotic, uncoordinated AI adoption that creates risk and fails to deliver strategic value. Organizations lose the ability to adopt AI intentionally and strategically.

Real-World Shadow AI Scenarios

Scenario 1: The Chatbot Incident

A contact center agent, overwhelmed by repetitive patient questions about appointment scheduling, insurance coverage, and clinic locations, discovered an AI chatbot tool through an online search. The tool promised to handle basic questions automatically, freeing agents to focus on complex cases. The agent integrated it into the contact center website without IT approval or compliance review.

Within two weeks, the chatbot gave a patient incorrect information about medication dosage. The patient, trusting the health system’s website, followed the advice and experienced an adverse reaction requiring emergency care.

The health system faced a lawsuit. When IT discovered the chatbot during the investigation, they found it had no integration with the EHR, no clinical validation, no audit trail of conversations, and no oversight mechanism. The agent had good intentions—reducing patient wait times and improving service—but created significant liability.

The outcome: The chatbot was immediately discontinued. The health system paid a settlement. The incident triggered a comprehensive Shadow AI audit that discovered eight additional unauthorized AI tools in use.

Scenario 2: The Documentation Tool

A physician, frustrated by documentation burden that consumed two hours every evening, started using an ambient AI tool to transcribe patient encounters. The tool recorded conversations during appointments, generated clinical notes automatically, and saved hours of documentation time. The physician was more present with patients, finished work on time, and felt less burned out.

The physician did not realize the tool was storing recordings on a third-party server without a Business Associate Agreement. The tool’s terms of service stated that recordings might be used to improve AI models—meaning patient conversations could be accessed by the vendor’s data science team.

When IT discovered the tool during a routine compliance audit, they found six months of patient conversations stored in violation of HIPAA. The health system had to notify affected patients, report the breach to HHS, conduct a risk assessment, and pay a significant fine.

The outcome: The tool was discontinued. The physician received compliance training. The health system implemented an AI governance policy to prevent future incidents.

Scenario 3: The Marketing Campaign

A marketing team, tasked with promoting the health system’s new patient portal, used generative AI to create social media content. The AI-generated posts included patient testimonials that were fabricated by the AI, clinical claims about portal benefits that were not verified, and images generated by AI without consideration of diversity or representation.

When patients and staff saw the posts, they raised concerns about authenticity and accuracy. Some recognized that the testimonials were not real. Others questioned whether the clinical claims were supported by evidence. The health system had to retract the content, apologize publicly, and review all AI-generated marketing materials.

The outcome: The damage to reputation was significant. The marketing team received training on AI risks and governance. The health system established approval requirements for AI-generated content.

How to Prevent Shadow AI: Building a Governance Framework

Preventing Shadow AI starts with a culture of accountability around how AI is used.

Shadow AI cannot be eliminated by prohibition. Staff will continue to adopt AI tools if those tools solve real problems and formal processes are too slow. The solution is not to ban AI—it is to build governance frameworks that enable safe experimentation while preventing risk.

Step 1: Establish an AI Governance Policy

Create a formal AI governance policy. Define what qualifies as AI, including tools that use machine learning, natural language processing, automated decision-making, or generative capabilities. Specify who can approve AI tools, whether that is the CMIO, CIO, AI steering committee, or another designated authority. Define the evaluation criteria, including compliance with HIPAA, security standards, integration requirements, vendor stability, and patient safety considerations. Establish the approval process, including request forms, review timelines, and decision criteria. Clarify what happens if AI is adopted without approval, including consequences and remediation processes.

The policy should be clear, accessible, and communicated to all staff. It should emphasize that governance exists to enable safe innovation, not to block progress. AI represents a pivotal shift in how we approach patient care, and the policy should treat it that way.

Step 2: Conduct Shadow AI Discovery

Identify AI tools currently in use without approval. Survey staff across all departments to ask what AI tools they use and why. Review IT logs to identify unauthorized software and cloud services. Interview department leaders to understand what tools their teams have adopted. Monitor vendor outreach to track which AI vendors are contacting staff directly.

Create an inventory of Shadow AI tools, assess risk level for each tool, and prioritize remediation based on compliance and security risk.
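As a rough illustration of the log-review part of discovery, the sketch below counts requests to a small watchlist of consumer AI domains, grouped by department. The log format (timestamp, department, domain separated by spaces), the department names, and the watchlist itself are assumptions made for this example; real proxy or DNS logs will need their own parsing.

```python
from collections import Counter

# Illustrative watchlist only; extend it with the services relevant to you.
AI_DOMAINS = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Google Gemini",
    "claude.ai": "Claude",
}


def find_ai_usage(log_lines):
    """Count requests per (department, tool).

    Assumes each line looks like 'timestamp department domain',
    which is a simplified, hypothetical log format.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # skip lines that do not match the assumed format
        _, department, domain = parts
        tool = AI_DOMAINS.get(domain.lower())
        if tool:
            hits[(department, tool)] += 1
    return hits


# Made-up sample data for demonstration.
sample_log = [
    "2024-05-01T09:14:00 contact-center chat.openai.com",
    "2024-05-01T09:20:00 contact-center chat.openai.com",
    "2024-05-01T10:02:00 marketing claude.ai",
    "2024-05-01T10:05:00 cardiology ehr.example.org",
]

usage = find_ai_usage(sample_log)
for (department, tool), count in usage.most_common():
    print(f"{department}: {tool} ({count} requests)")
```

A count like this only shows where to look. The staff surveys and leader interviews are still needed to learn why a tool is in use and what data goes into it.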

Step 3: Evaluate and Consolidate Tools

For each Shadow AI tool discovered, conduct a structured evaluation. Assess compliance risk by determining whether the tool processes patient data, whether it has a Business Associate Agreement, and whether it meets HIPAA requirements. Assess security risk by evaluating whether the tool is secure, whether it has known vulnerabilities, and whether data is encrypted. Assess integration by determining whether the tool connects to EHR or contact center platforms or creates manual workarounds. Assess value by understanding whether the tool solves a real problem and whether better alternatives exist.

Based on this evaluation, decide to approve the tool if it meets criteria and can be formalized with proper safeguards, replace the tool if it has value but better alternatives exist, or discontinue the tool if it creates unacceptable risk and must be stopped immediately.
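The approve, replace, or discontinue decision can be sketched as a small rule set. The field names and the specific rules below are assumptions chosen for illustration, not a prescribed standard: patient data without a BAA or without encryption is treated as unacceptable risk, and integration gaps are folded into the "better alternative exists" flag for brevity.

```python
from dataclasses import dataclass


@dataclass
class ToolEvaluation:
    # All fields are hypothetical names for the criteria in the text.
    name: str
    processes_patient_data: bool
    has_baa: bool
    data_encrypted: bool
    solves_real_problem: bool
    better_alternative_exists: bool


def decide(ev: ToolEvaluation) -> str:
    """Return 'approve', 'replace', or 'discontinue' under the assumed rules."""
    # Unacceptable risk: patient data handled without a BAA or encryption.
    if ev.processes_patient_data and not (ev.has_baa and ev.data_encrypted):
        return "discontinue"
    # Assumption: a tool that solves no real problem is not worth formalizing.
    if not ev.solves_real_problem:
        return "discontinue"
    # The tool has value, but a better-integrated option is available.
    if ev.better_alternative_exists:
        return "replace"
    return "approve"


scribe = ToolEvaluation(
    name="Example Scribe",
    processes_patient_data=True,
    has_baa=False,
    data_encrypted=True,
    solves_real_problem=True,
    better_alternative_exists=False,
)
print(decide(scribe))  # discontinue
```

In practice an organization might add a fourth outcome, such as approval conditional on negotiating a BAA, rather than discontinuing every tool that lacks one.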

Step 4: Create an Approved AI Tools List

Develop a list of pre-approved AI tools that staff can use without individual approval. Include generative AI tools approved for drafting content with clear restrictions on patient data input. Include scheduling tools that are integrated with EHR systems and meet security requirements. Include chatbots that are approved for patient-facing use with clinical validation and oversight. Include documentation tools that have Business Associate Agreements and proper security controls.

Make the list easily accessible through the intranet or staff portal and update it regularly as new tools are evaluated and approved.

Step 5: Streamline the Approval Process

Make it easy for staff to request approval for new AI tools. Create a simple request form that captures tool name, use case, vendor information, and data to be processed. Establish a fast review timeline of two to four weeks, not months. Define clear decision criteria covering compliance, security, integration, and value. Provide transparent communication that explains approval or denial reasons and suggests alternatives when tools are denied.

The goal is to make formal approval faster and easier than Shadow AI adoption. If the approval process is slow and bureaucratic, staff will continue to bypass it.
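One way to keep the review timeline honest is to record each request with a due date. The sketch below captures the four form fields named above and applies a 14-day window, the fast end of the two-to-four-week target. The class name, field names, and sample values are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed review window: two weeks.
REVIEW_WINDOW_DAYS = 14


@dataclass
class AIToolRequest:
    tool_name: str
    use_case: str
    vendor: str
    data_processed: str
    submitted: date

    def review_due(self) -> date:
        """Date by which reviewers should respond."""
        return self.submitted + timedelta(days=REVIEW_WINDOW_DAYS)

    def is_overdue(self, today: date) -> bool:
        """True once the due date has passed without a decision."""
        return today > self.review_due()


# Made-up request for demonstration.
request = AIToolRequest(
    tool_name="Example Scribe",
    use_case="Draft visit summaries",
    vendor="Example Vendor Inc.",
    data_processed="Clinical notes containing PHI",
    submitted=date(2025, 3, 3),
)
print(request.review_due())  # 2025-03-17
```

Tracking overdue requests gives the steering committee a simple signal for when the formal process is becoming slow enough to push staff back toward Shadow AI.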

Step 6: Educate Staff on AI Risks

Conduct training on AI risks and governance requirements. Cover HIPAA compliance requirements for AI tools, including the need for Business Associate Agreements and proper security controls. Explain data security risks of unauthorized AI use, including the potential for breaches and regulatory consequences. Address patient safety concerns with AI-generated content, including the risk of inaccurate or harmful outputs. Present the governance policy and approval process clearly. Share the approved AI tools list and explain how to request new tools.

Make staff aware of risks without creating fear or resistance. Emphasize that governance exists to enable safe innovation and protect both patients and staff.

Step 7: Monitor and Enforce

Establish ongoing monitoring to detect new Shadow AI adoption. Conduct regular IT audits to identify unauthorized software and cloud services. Survey staff periodically to ask what tools are being used. Track vendor outreach to understand which AI vendors are contacting staff directly. Encourage incident reporting so staff feel comfortable reporting AI issues or concerns.
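A recurring audit can be reduced to comparing what was observed against the approved list. The sketch below is a minimal version of that comparison, with hypothetical tool names; it separates tools flagged for the first time from tools that were flagged in an earlier audit and are still in use.

```python
def audit_tools(observed, approved, previously_flagged=()):
    """Split unapproved tools into newly seen and recurring ones."""

    def normalize(names):
        # Case and whitespace differences should not hide a match.
        return {name.strip().lower() for name in names}

    flagged = normalize(observed) - normalize(approved)
    previous = normalize(previously_flagged)
    return {
        "new": sorted(flagged - previous),
        "recurring": sorted(flagged & previous),
    }


# Made-up audit inputs for demonstration.
report = audit_tools(
    observed=["ChatGPT", "Example Scribe", "Example Scheduler", "chatgpt "],
    approved=["Example Scheduler"],
    previously_flagged=["Example Scribe"],
)
print(report)  # {'new': ['chatgpt'], 'recurring': ['example scribe']}
```

Recurring entries are the ones that most likely need a conversation with the department involved, since earlier remediation did not stick.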

Enforce the governance policy consistently but fairly. Recognize that staff who adopted Shadow AI before the policy existed had good intentions. Focus on remediation and education rather than punishment.

The Balance: Enabling Innovation While Preventing Risk

The goal of AI governance is not to slow innovation. It is to enable safe innovation.

Healthcare organizations need artificial intelligence to improve patient access, reduce operational costs, address staff burnout, and remain competitive. But they also need to protect patient data, ensure compliance, maintain patient safety, and preserve trust.

Effective AI governance balances innovation and risk. It enables experimentation by making it easy to try new AI tools in controlled environments with proper safeguards. It prevents chaos by ensuring AI adoption is coordinated, strategic, and aligned with organizational goals. It protects patients by ensuring AI tools meet safety, compliance, and quality standards before deployment. It builds trust by demonstrating that AI is being adopted responsibly and transparently.

The alternative—prohibition or inaction—leads to Shadow AI proliferation and uncontrolled risk. Organizations that ban AI without providing approved alternatives will see staff adopt tools anyway. Organizations that ignore AI governance will face compliance violations, security breaches, and patient safety incidents.

Governance before automation.

Case Study: How One Health System Addressed Shadow AI

The Discovery

A large health system with seven hospitals and more than 800,000 annual patient encounters conducted a routine IT audit as part of their annual security assessment. The audit discovered 15 AI tools being used across the organization without formal approval or oversight.

The tools ranged from ChatGPT being used by contact center agents to draft patient communications, to ambient documentation tools being used by physicians to transcribe encounters, to AI scheduling assistants being used by administrative staff to optimize appointment calendars, to generative AI being used by marketing to create social media content.

The CMIO was alarmed. None of the tools had Business Associate Agreements. None had been reviewed for HIPAA compliance. None were integrated with the EHR system. Each tool represented potential compliance violations, security vulnerabilities, and patient safety risks.

The Response

The health system took a structured approach to address Shadow AI over 90 days.

Weeks 1-2: Shadow AI Discovery and Risk Assessment

The IT and compliance teams conducted a comprehensive Shadow AI discovery. They surveyed staff across all departments to identify AI tools in use. They reviewed IT logs and network traffic to detect unauthorized cloud services. They interviewed department leaders to understand adoption patterns.

They created an inventory of 15 Shadow AI tools and assessed each for risk level. Three tools were classified as high risk due to HIPAA violations involving patient data processing without BAAs. Five tools were classified as medium risk due to security concerns or integration issues. Seven tools were classified as low risk but still required formal approval and oversight.

Weeks 3-4: Immediate Risk Mitigation

The health system took immediate action on high-risk tools. They discontinued the three tools that were processing patient data in violation of HIPAA. They notified affected staff and provided alternative approved tools. They conducted risk assessments to determine whether breach notification was required.

For medium-risk tools, they negotiated Business Associate Agreements with five vendors whose tools had value but lacked proper compliance documentation. They worked with IT to establish security controls and monitoring.

For low-risk tools, they identified approved alternatives for seven tools that could be replaced with enterprise solutions offering better integration and support.

Month 2: Governance Framework Development

The health system established a comprehensive AI governance framework. They created an AI governance policy defining evaluation criteria, approval processes, and accountability. They formed an AI steering committee including the CMIO, CIO, compliance officer, and operations leader. They developed AI tool evaluation criteria covering compliance, security, integration, vendor stability, and patient safety. They streamlined the approval process to achieve a two-week review timeline instead of the previous months-long process.
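To make the evaluation criteria and the two-week timeline concrete, here is a minimal sketch of a review record. It assumes a simple pass/fail result per criterion and a rule that any failed criterion rejects the tool. Both are simplifications chosen for the example, and the `ToolReview` class and its method names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# The five evaluation criteria named in the text.
CRITERIA = ("compliance", "security", "integration", "vendor_stability", "patient_safety")
REVIEW_WINDOW = timedelta(weeks=2)  # the two-week review target


@dataclass
class ToolReview:
    """Tracks one approval request (a hypothetical structure)."""
    tool_name: str
    submitted: date
    results: dict = field(default_factory=dict)  # criterion -> passed (bool)

    @property
    def due(self) -> date:
        """Date by which the committee should decide."""
        return self.submitted + REVIEW_WINDOW

    def record(self, criterion: str, passed: bool) -> None:
        if criterion not in CRITERIA:
            raise ValueError(f"unknown criterion: {criterion}")
        self.results[criterion] = passed

    def decision(self) -> str:
        # Assumed rule: any failed criterion rejects the tool.
        if any(passed is False for passed in self.results.values()):
            return "rejected"
        # Approve only when every criterion has been assessed and passed.
        if all(c in self.results for c in CRITERIA):
            return "approved"
        return "pending"


review = ToolReview("ambient documentation tool", submitted=date(2024, 3, 1))
review.record("compliance", True)
review.record("security", True)
print(review.due, review.decision())
```

In practice a committee would likely want more nuance than pass/fail, such as conditional approval with required safeguards, but the record shows how the criteria and the deadline fit together.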

Month 3: Communication, Training, and Enablement

The health system communicated the governance policy to all staff through multiple channels including email, intranet, department meetings, and training sessions. They conducted training on AI risks, HIPAA requirements, and the approval process. They published an approved AI tools list on the intranet with clear guidance on appropriate use. They launched an AI innovation pilot program to enable safe experimentation with new AI tools in controlled environments.

The Outcome

After 90 days, the health system saw results in five areas.

Shadow AI eliminated. All unauthorized tools were either discontinued, approved with proper safeguards, or replaced with approved alternatives. The organization had complete visibility into AI tool usage.

Compliance restored. All approved tools had Business Associate Agreements and met HIPAA requirements. Security controls and monitoring were established. No compliance violations remained.

Innovation enabled. Staff could request new AI tools with a two-week review timeline. The approved tools list provided immediate access to vetted solutions. The innovation pilot program enabled safe experimentation.

Risk reduced. No compliance violations occurred after governance implementation. No patient data breaches resulted from AI tools. Patient safety improved through proper oversight of AI-generated content.

Trust built. Staff understood that governance was about enabling safe innovation, not blocking progress. Adoption of approved AI tools increased because staff had confidence in their compliance and security.

The health system went from chaotic Shadow AI proliferation to intentional, governed AI adoption in 90 days.

Conclusion: Governance Before Automation

Artificial intelligence in healthcare offers tremendous promise. AI can improve diagnostic accuracy, accelerate drug discovery, enhance patient engagement, reduce administrative burden, and optimize operations. Healthcare organizations that adopt AI strategically will gain competitive advantages and improve patient outcomes.

But AI adoption without governance creates Shadow AI—unauthorized tools that proliferate across organizations, creating compliance violations, security vulnerabilities, patient safety risks, and operational chaos.

Shadow AI is not a technology problem. It is a governance problem. Healthcare organizations that lack formal AI governance policies will continue to see unauthorized AI adoption regardless of the risks.

The solution is not to ban AI. It is to build governance frameworks that enable safe experimentation while preventing risk.

Effective AI governance includes clear policies defining what AI is and how it is approved, Shadow AI discovery to identify unauthorized tools and assess risk, risk assessment and remediation for existing tools, approved AI tools lists for common use cases, streamlined approval processes for new tools, staff education on AI risks and governance requirements, and ongoing monitoring and enforcement to detect new Shadow AI.

Governance before automation.

By establishing governance frameworks, healthcare organizations can harness the benefits of artificial intelligence in healthcare—improved patient access, reduced operational costs, enhanced clinical outcomes, and competitive advantage—while protecting patient data, ensuring compliance, maintaining patient safety, and preserving trust.

The alternative is Shadow AI proliferation, uncontrolled risk, and inevitable failures that undermine the promise of AI in healthcare.

Next Steps

If your organization is concerned about Shadow AI, consider these actions:

Conduct a Shadow AI discovery. Survey staff, review IT logs, and interview department leaders to identify unauthorized AI tools currently in use across your organization.

Assess risk. Evaluate each tool for compliance with HIPAA, security vulnerabilities, integration issues, and patient safety concerns. Prioritize remediation based on risk level.

Establish governance. Create an AI governance policy, define approval processes, form an AI steering committee, and establish accountability for AI oversight.

Communicate and educate. Train staff on AI risks, HIPAA requirements, and governance policies. Make the approval process clear and accessible.

Enable safe innovation. Create an approved AI tools list, streamline the approval process, and establish innovation pilot programs for safe experimentation.

Need help? AuthenTech AI specializes in AI governance and readiness assessment for healthcare organizations. Our AI Adoption Health Check includes Shadow AI discovery, risk assessment, governance framework development, and implementation support.

Contact us: Visit authentech.ai or email sales@authentech.ai to learn more.